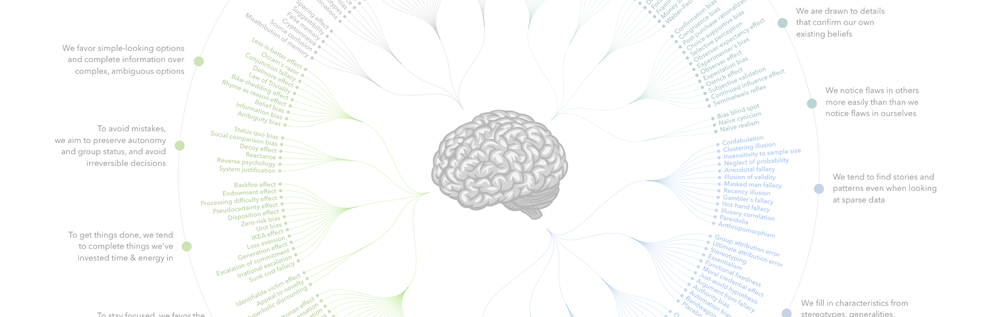

Image by John Manoogian III (Wikimedia)

What is gender bias in NLP?

NLP models are often trained on data that contains clear gender bias. Some examples of how this bias appears:

- Only two genders or sexes are mentioned, which suppresses lived realities (for example, those of intersex and non-binary people),

- Gender is assumed for certain professions (for example, describing a doctor with the pronoun ‘he’ or a nurse with the pronoun ‘she’),

- Gender is poorly defined, or not defined at all, in NLP research and NLP bias research. In ‘Theories of “Gender” in NLP Bias Research’, Devinney et al. survey 176 papers on “gender bias” and find that nearly half of them do not define what they mean by “gender”, or assume that gender is something “everyone knows”. Moreover, most papers consider only men and women when operationalizing gender.

Such bias in NLP can have negative consequences. These assumptions render certain groups “unintelligible”, and being unintelligible to society and to computer systems can have violent consequences. For example, such bias erases trans, nonbinary, and intersex people and reduces cis people to stereotypes. Systems built on these assumptions therefore cannot produce fair NLP.

Bias in NLP causes harm, which Hannah described in the following ways:

We observe harms of allocation when systems distribute resources (material and non-material) in a way that is unfair towards subordinated groups. For example, a website shows STEM job advertisements to men but not to women with the same qualifications or profiles.

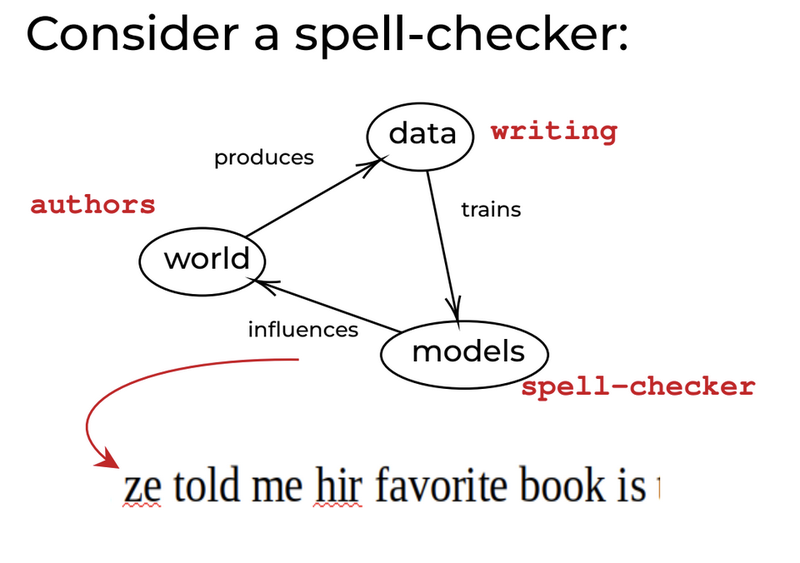

We observe harms of representation when systems reinforce the subordination of already-disadvantaged groups. For example, names associated with Black Americans are given less positive sentiment scores than names associated with white Americans. Representational harm also occurs when a system reinforces stereotypes that shape people’s biases, which in turn leads to more bias. As the figure illustrates, biased data produces biased models, which influence the world, which then produces more biased data. Hence, the cycle of bias continues.

Hannah gave Adlede the example of a spell checker that did not include the gender-neutral pronoun “ze”.

Tools and tips to mitigate bias

The main takeaway from this lecture is that we should be clear and critical about our (working) definitions of our data. For example, we should check carefully whether the data is biased and how it was collected.

Since we live in a normative society, we may never have a completely unbiased dataset. The following two approaches, which can be combined, can be taken to mitigate bias in NLP:

- Manipulate Data

  - Augment with artificial data (“gender-swapping”)

  - Identify and remove “influential” documents

  - Annotate with gender

- Manipulate Model

  - Fine-tune with similar datasets (counter-biased)

  - Embedding ‘debiasing’

    - Remove gendered subspace

    - Train models to isolate gender in specific dimensions, then ignore those later

  - Adjust algorithms (constraints, adversarial learning)

    - Generally involves specifying gender as a protected attribute
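To make two of these ideas more concrete, here is a minimal Python sketch of “gender-swapping” augmentation and of removing a gendered direction from an embedding. It is only an illustration under stated assumptions, not the method presented in the lecture. The word pairs, the function names (`gender_swap`, `augment`, `remove_direction`), and the toy vectors are all hypothetical. The swap table is deliberately tiny and binary, and it leaves out ambiguous forms such as “her” (which can correspond to “him” or “his”). The embedding part treats the gendered subspace as a single direction, which is a simplification.

```python
import re

# Hypothetical, deliberately small table of unambiguous word pairs.
# "her"/"his"/"him" are omitted: swapping them correctly needs grammatical context.
PAIRS = [("he", "she"), ("himself", "herself"), ("man", "woman"),
         ("men", "women"), ("father", "mother"), ("son", "daughter")]

# Build a lookup that works in both directions.
SWAP = {}
for a, b in PAIRS:
    SWAP[a] = b
    SWAP[b] = a

# Match whole words only, ignoring case.
_WORD = re.compile(r"\b(" + "|".join(map(re.escape, SWAP)) + r")\b", re.IGNORECASE)


def gender_swap(sentence):
    """Return a copy of the sentence with each listed word replaced by its pair."""
    def repl(match):
        word = match.group(0)
        swapped = SWAP[word.lower()]
        # Keep a leading capital so sentence-initial words still look right.
        return swapped.capitalize() if word[0].isupper() else swapped
    return _WORD.sub(repl, sentence)


def augment(corpus):
    """Return the original sentences followed by their gender-swapped copies."""
    return corpus + [gender_swap(s) for s in corpus]


def remove_direction(vector, direction):
    """Subtract from `vector` its projection onto `direction`."""
    norm_sq = sum(d * d for d in direction)
    scale = sum(v * d for v, d in zip(vector, direction)) / norm_sq
    return [v - scale * d for v, d in zip(vector, direction)]


if __name__ == "__main__":
    print(augment(["He is a doctor and she is a nurse."]))

    # Toy 2-dimensional embeddings, invented purely for illustration.
    he, she, doctor = [1.0, 0.2], [-1.0, 0.2], [0.4, 0.9]
    gender_direction = [h - s for h, s in zip(he, she)]
    print(remove_direction(doctor, gender_direction))
```

After `remove_direction`, the vector for “doctor” has no component left along the chosen direction, which is the basic idea behind removing a gendered subspace. Note that a swap table like this one inherits the same two-gender assumption the post criticizes, so in practice it would need to be extended with care, for example with neutral forms such as “they” or “ze”.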

By Kabir Fahria (They/them or hen)

Developer, Aeterna Labs